Employee relations is in the middle of its biggest shift in a decade. AI is taking over the repeatable parts of the job faster than most teams expected, and the parts that still require a human in the room are getting more valuable, not less.

This recap covers where AI already belongs in the ER workflow, where it absolutely shouldn't go, and what the new job description for modern ER leaders actually looks like.

The Shift From Case Management to Judgment Calls

Employee relations used to be measured by how many cases you closed. Intake, investigate, document, close. Rinse and repeat.

That frame is breaking down. AI can now pick up intake patterns, flag risk signals in minutes, and draft investigation summaries faster than most humans can. The scoreboard is changing: what matters most isn't how many tickets you processed, but the decisions that only a human can make.

Where AI Is Already Pulling Its Weight

Three parts of the ER workflow have seen real productivity gains. Each one removes grunt work without touching the parts of the job that require discernment.

Intake and triage. Most ER teams are drowning in inbound. A significant chunk of what lands in the queue is noise, duplicates, or issues that belong with a different function. AI can read the report, check for prior history, categorize the concern, and route it before a human ever opens the ticket. That alone gives ER teams hours back every week.

Pattern detection. A single complaint about a manager looks like a one-off. Three complaints across six months that use similar language and describe similar behavior tell a very different story. Humans are bad at spotting those patterns across thousands of cases. AI is good at it. With modern case management, that kind of signal surfaces automatically instead of sitting buried in a spreadsheet.

Documentation. Investigation notes, interview summaries, and close-out reports eat enormous amounts of time. AI drafts give you an 80% starting point in seconds. The investigator edits, adds context, and signs off. Hours become minutes.

Where Human Judgment Still Wins

This is where the line is clearest. These are the parts of ER work where handing off to AI creates real risk.

Investigating the messy human stuff. When a complaint involves retaliation, power dynamics, or genuinely conflicting accounts, pattern matching isn't enough. You need someone who can sit in a room (or a Zoom), read body language, ask the follow-up that wasn't scripted, and hold space for uncomfortable truths. No model does that well. It may never do it well.

Judgment on consequence. Determining what happens after an investigation is finished is not a classification problem. It's a judgment call that weighs culture, precedent, legal risk, the specifics of the people involved, and what the organization wants to stand for. That call has to sit with a person who is accountable for it.

Repairing relationships. Sometimes the outcome isn't termination. It's a hard conversation, a reset, a coaching plan, a rebuilt team dynamic. That is deeply human work. AI can prep you for the conversation. It can't have the conversation.

Reading context AI can't see. Policy exceptions exist for a reason. An ER lead who has been with the company for five years knows which manager is going through a divorce, which team just lost a beloved teammate, and which leader is under pressure from above. That context shapes the right response. No model sees any of it.

The New Shape of the ER Job

If AI handles intake, triage, pattern detection, and first-draft documentation, what's left? A lot. Just different work than before.

ER leaders spend less time processing cases and more time on the things that actually move the culture: coaching managers, building training programs, running proactive investigations into hot-spot teams, and partnering with legal and people ops on policy design. The function starts looking less like a help desk and more like an internal risk and culture practice.

Rebecca Taylor has written about the difference between HR and employee relations, a distinction that gets sharper when AI is in the mix. ER is no longer a subset of HR admin work. It's a specialized function with its own toolkit and its own KPIs.

The ER leaders who adapt fastest start measuring different things. Average time to resolution still matters, but so do recurrence rate, manager training completion, and the ratio of proactive to reactive cases. If AI lets you handle ten times as much intake in the same time but your recurrence rate goes up, you haven't actually improved anything. You've just processed more of the same problems.

What to Automate First (And What to Leave Alone)

A simple framework for ER teams evaluating AI today:

Automate confidently: intake forms, categorization, routing, initial risk scoring, duplicate detection, documentation drafts, policy lookup, historical pattern reporting, data anonymization for reports to leadership.

Use AI with a human in the loop: investigation planning, interview question generation, sentiment analysis on reporter communications, trend summaries for executive updates, training content personalization.

Keep fully human: final investigation conclusions, disciplinary decisions, accommodation approvals, terminations, reinstatements, public statements, any communication with a reporter about their specific case.

If you're unsure where something falls, the test is simple. If the decision involves accountability, ambiguity, or someone's livelihood, a person owns it. AI can assist. AI doesn't decide.

Risk, Bias, and the Questions HR Has to Ask

ER is often the only function in the room raising hard questions about AI. That is a feature, not a bug.

Three questions worth asking of any AI tool an ER team is considering:

What data was it trained on, and does that data reflect the people in your workforce? A model trained primarily on one population can produce skewed risk scores when applied to a different one. That is a legal and ethical problem, not just a technical one.

How are edge cases surfaced to a human? The worst-case scenario is an AI that quietly misclassifies a serious complaint as routine. Good systems are designed to escalate uncertainty, not hide it.

Who is accountable when the AI is wrong? This has to be answered in writing, not assumed. If an automated triage system deprioritizes a complaint that turns out to be serious, the organization is still on the hook. The workflow should make ownership explicit.

Those conversations can create friction with leaders who want to move faster. The friction is the point. ER exists to surface risk before it becomes a crisis, and the AI question is just a new version of a job the function has always done.

What Modern ER Teams Are Building Toward

The ER teams getting the most out of AI share a few patterns. They treat it as a force multiplier, not a replacement. They invest in training managers, because better managers prevent more cases than any system can resolve. They measure outcomes, not activity. And they stay close to the work, because even great AI still misses things a human in the room would catch.

The job of employee relations is getting harder and more important at the same time. AI is part of why. Used well, it frees ER leaders to do the strategic work that actually changes cultures. Used poorly, it creates a new layer of risk on top of the ones the function already manages.

The human judgment part isn't going anywhere. If anything, it's the most valuable thing the function has.

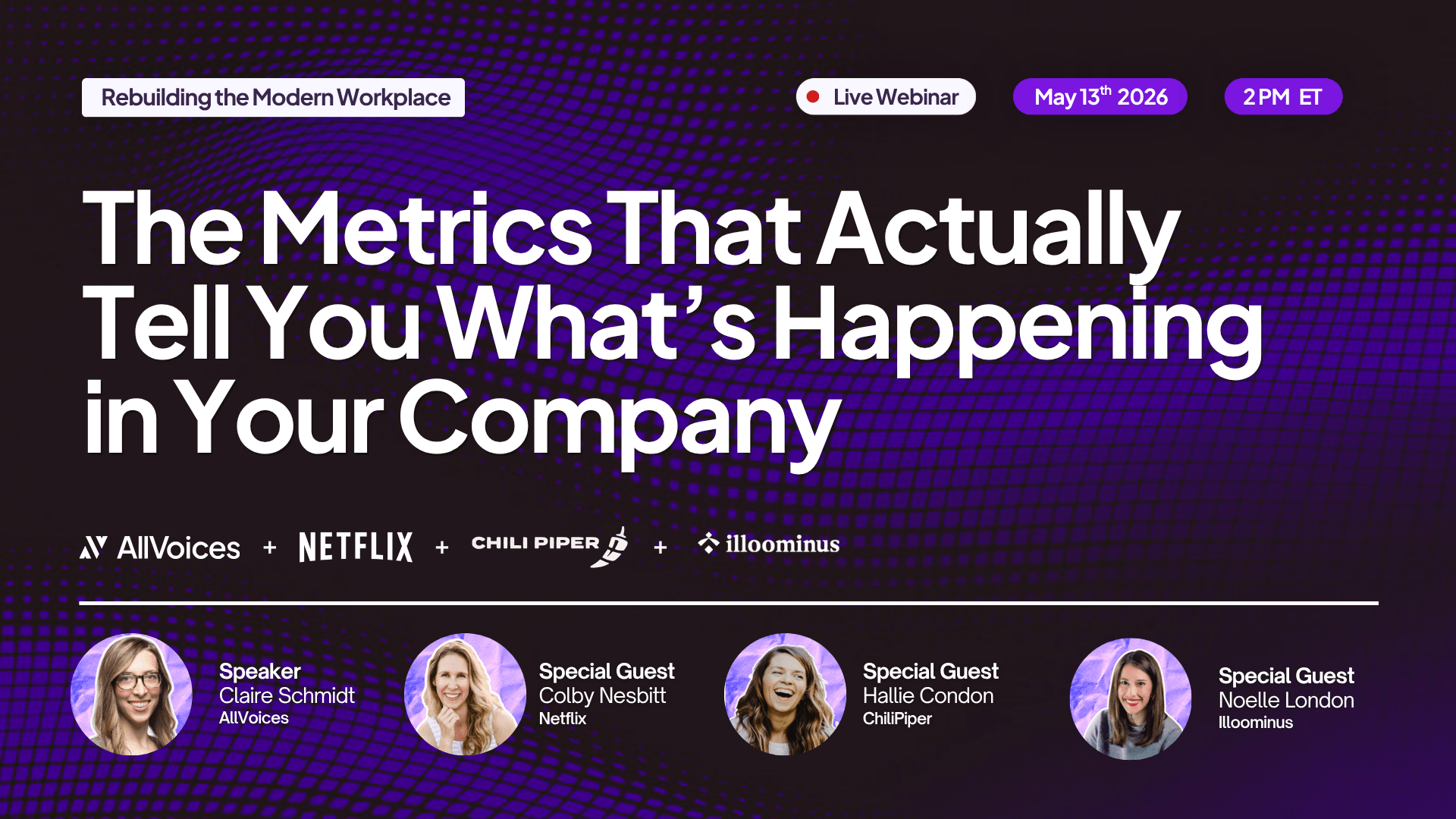

Want to see how AI-native employee relations works in practice? Book a demo with AllVoices and see how modern ER teams are running cases, spotting patterns, and keeping humans firmly in charge of the calls that matter.

AI in Employee Relations: Where Human Judgment Wins

Got more questions? Email us at support@allvoices.co and we'll respond ASAP.
